Natural Adversarial Examples (NAEs) are samples that arise naturally from the environment (rather than being artificially created via pixel perturbation) yet fool the classifier into misclassification. NAEs are valuable for identifying a classifier's vulnerabilities and for robustly measuring its performance.

Earlier work collects NAEs by filtering a huge set of real images. We argue that this approach is passive and relies on the assumption that NAEs exist in the candidate set in the first place. In this work, we instead propose to synthesize NAEs using the powerful Stable Diffusion.

The method and examples generated by SD-NAE are shown below. For more details, please refer to our paper!

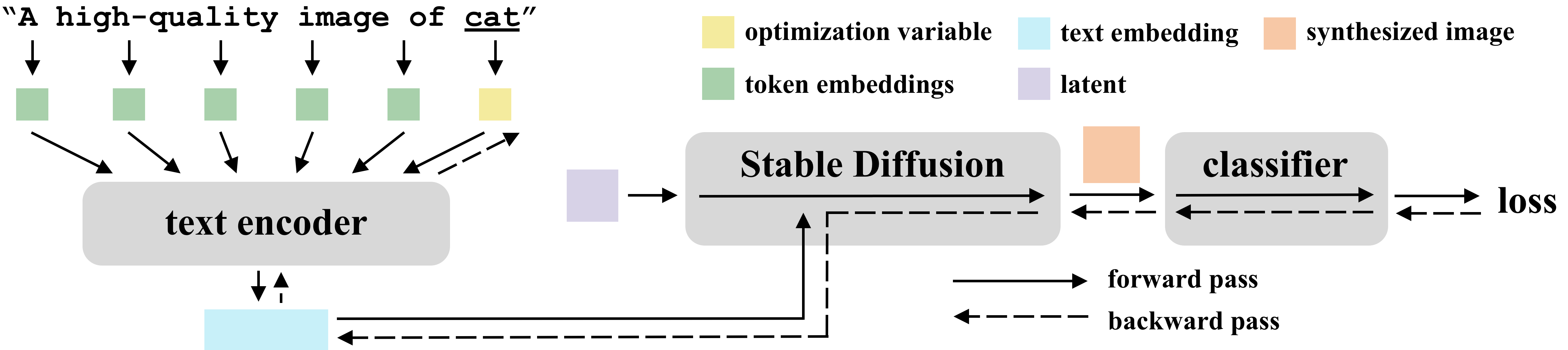

Overview of SD-NAE, which generates natural adversarial examples by optimizing the token embedding of the class-related token. The optimization is guided by the gradient of the loss backpropagated from the target classifier.
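
The optimization loop described in the caption can be illustrated with a toy sketch. This is not the SD-NAE implementation: the frozen "generator" and "classifier" below are tiny hypothetical linear maps standing in for Stable Diffusion and the target classifier, the cross-entropy loss and the gradient-ascent update are assumptions made for illustration, and all names (`W_GEN`, `W_CLS`, `optimize_embedding`, and so on) are invented here. Only the embedding is updated; both models stay fixed.

```python
import math

# Hypothetical frozen stand-ins (NOT Stable Diffusion or ResNet-50):
W_GEN = [[1.0, 0.0], [0.0, 1.0], [0.5, -0.5]]  # embedding (2) -> "image" (3)
W_CLS = [[1.0, -1.0, 0.5], [-1.0, 1.0, -0.5]]  # "image" (3) -> logits (2 classes)


def matvec(matrix, vector):
    """Multiply a row-major matrix by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def softmax(logits):
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [v / total for v in exps]


def predict(emb):
    """Class predicted by the frozen classifier on the generated sample."""
    logits = matvec(W_CLS, matvec(W_GEN, emb))
    return max(range(len(logits)), key=logits.__getitem__)


def loss_grad(emb, label):
    """Gradient of the cross-entropy loss w.r.t. the embedding.

    The gradient is backpropagated by hand: logits -> "image" -> embedding.
    """
    probs = softmax(matvec(W_CLS, matvec(W_GEN, emb)))
    d_logits = [p - (1.0 if i == label else 0.0) for i, p in enumerate(probs)]
    d_img = [
        sum(W_CLS[i][j] * d_logits[i] for i in range(len(W_CLS)))
        for j in range(len(W_CLS[0]))
    ]
    return [
        sum(W_GEN[j][k] * d_img[j] for j in range(len(W_GEN)))
        for k in range(len(W_GEN[0]))
    ]


def optimize_embedding(init_emb, label, lr=0.1, max_steps=200):
    """Ascend the classifier loss until the prediction leaves `label`.

    Returns the final embedding and the number of update steps taken.
    """
    emb = list(init_emb)
    for step in range(max_steps):
        if predict(emb) != label:
            return emb, step
        grad = loss_grad(emb, label)
        emb = [e + lr * g for e, g in zip(emb, grad)]  # only the embedding changes
    return emb, max_steps


if __name__ == "__main__":
    init = [1.0, 0.0]  # initial embedding, classified as class 0
    adv, steps = optimize_embedding(init, label=0)
    print("initial prediction:", predict(init))
    print("prediction after optimization:", predict(adv), "after", steps, "steps")
```

In a real pipeline, the hand-written backward pass would be replaced by automatic differentiation through the diffusion model and the classifier, and the loss may include additional terms; see the paper for the actual objective.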

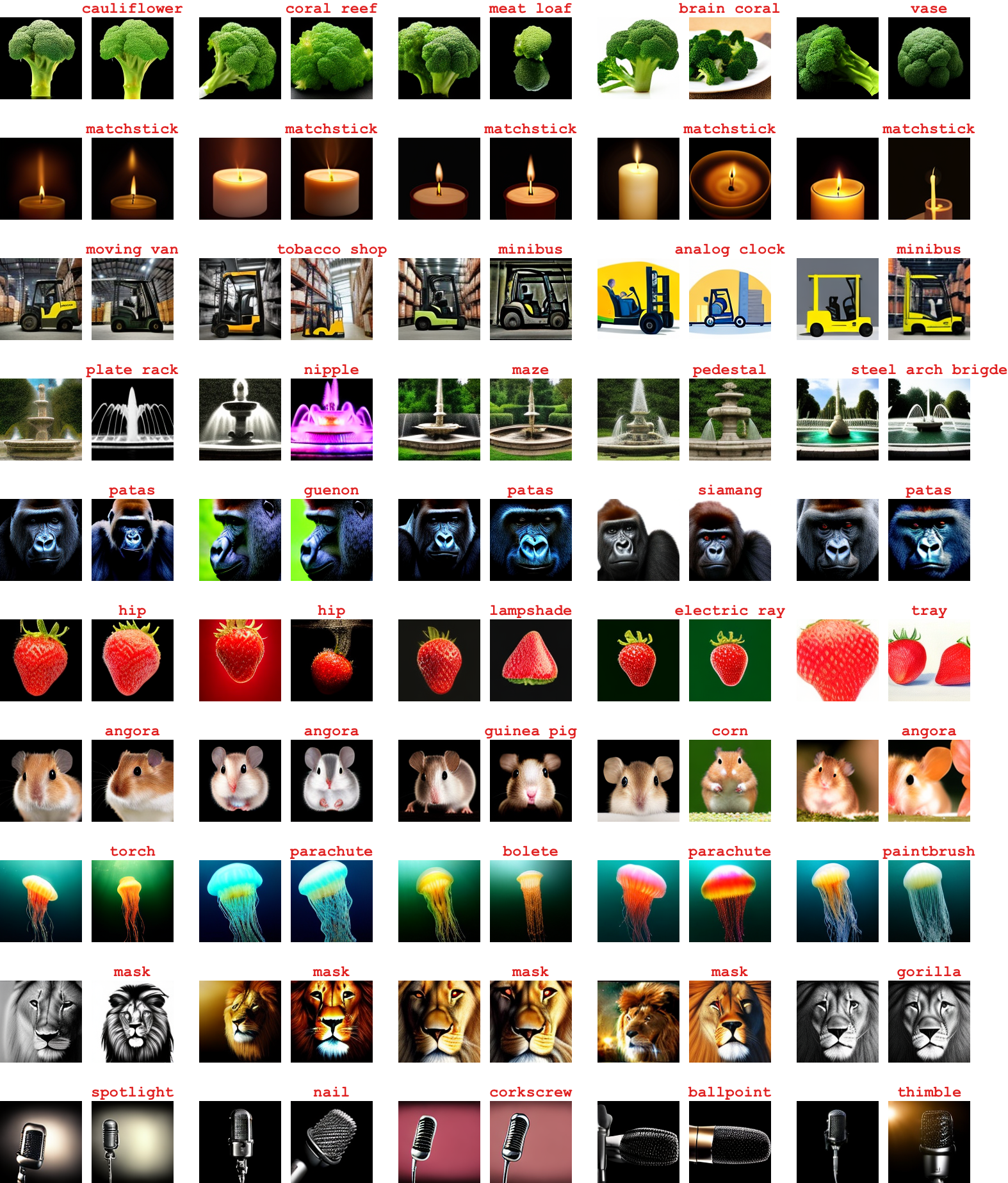

In each pair, the left image is generated with the initialized token embedding. Importantly, we make sure that all left images are correctly classified by the ImageNet ResNet-50 model in the first place. The right image is the result of SD-NAE optimization initialized from the corresponding left image, and we mark the classifier's prediction in red above it.